All in One View

Content from Introduction

Last updated on 2026-02-25 | Edit this page

Estimated time: 15 minutes

Background

Writing software is an integral component of the research activities of many astronomers. Increasingly, such software is written in collaborative efforts involving multiple researchers across multiple institutions. Despite this, training for astronomy students and early career researchers in best practices for collaborative software development is often informal and fails to make use of software industry knowledge. The goal of this course is to fill this training gap.

In this course you will be guided through the development of a software package, beginning at proof of concept, and finishing with a project that is findable, accessible, interoperable, reusable (FAIR), and citable by others. This course is presented in lessons which represent development cycles. Each cycle will be another step along the path of making code that you are proud of and can be recognised for.

You can engage with this course as a reading assignment but we recommend that you follow along at home by applying all the lessons learned to your own software project. This course is designed with collaborative software development in mind. Throughout the course there will be self paced ungraded activities to complete that will be marked as either SOLO or GROUP activities. If you are joining this course as part of a group wishing to improve your collaborative software development skills then you should aim to complete all the activities. If you are joining this course on your own then some of the group activities will not be relevant to you and you can skip them as appropriate.

At the beginning of this course you will be taken through a typical research driven development phase. In this phase we will create a proof of concept code which we then build on throughout the course. This project is extremely simple in scope as it intended to be a minimum working example that we can use to demonstrate various development techniques. Many of the examples in this course will refer to the example code. If you have an existing software project we recommend that you use that project when doing the various activities as you will encounter more issues, learn more techniques, and the solutions to the activities will be directly usable and beneficial to you.

Assumed knowledge and software requirements

This course assumes that you have basic proficiency in python. While python is the language that we’ll be working with primarily, most of the lessons are applicable to any language.

A large part of working in a group will involve keeping track of changes to a shared code base. For this we will be using the git version control system, and in particular we will be using GitHub as the remote repository.

Software requirements:

- Python 3.8 +

- A integrated development environment (IDE) or text editor of choice

- We recommend PyCharm or Visual Studio Code

- An operating system which gives you a proper command line

- For windows this means using something like gitbash, WSL, or the Anaconda prompt

- For OSX or Linux the regular terminal will be fine

- git, either from the command line, using a git desktop app, or as an add on to PyCharm or VSCode.

- An account on GitHub is required for some of the activities.

Content from Coding Up A Proof Of Concept

Last updated on 2026-02-24 | Edit this page

Estimated time: 110 minutes

Overview

Questions

- Where/How do I start a new project?

- How do I use a function?

- How do I document and test my code?

- Can I make a command line interface for my code?

Objectives

- Build a minimal working or “proof of concept” project that we can build on in this workshop

Beginning a new software project

Before we do anything to our project we should talk about:

Organisation

Organisation is key to a good project. Every time you start a new project or explore a new idea it is a good idea to create a new space for that project. This means creating a new directory for you to collect all the relevant data, software, and documentation. You will be involved in many projects through your career and often will have to manage multiple projects simultaneously. It is therefore not just a good idea to organise each project, but to have a consistent organisation structure between projects. In this section we will make some recommendations for organising a software project.

Put each project in its own directory, which is named after the project.

The location of this directory will depend on a higher level organisation scheme. For example you may separate your projects based on funding, based on collaboration, or based on research area.

Within you software project directory we recommend the following structure:

OUTPUT

.

├── README.md <- Description of this project

├── bin <- Your compiled code can be stored here (not tracked by git)

├── config <- Configuration files, e.g., for doxygen or for your model if needed

├── data

│ ├── external <- Data from third party sources.

│ ├── interim <- Intermediate data that has been transformed.

│ ├── processed <- The final data sets for analysis.

│ └── raw <- The original, immutable data dump.

├── docs <- Documentation, e.g., doxygen or reference papers (not tracked by git)

├── notebooks <- Jupyter or R notebooks

├── reports <- For a manuscript source, e.g., LaTeX, Markdown, etc., or any project reports

│ └── figures <- Figures for the manuscript or reports

└── src <- Source code for this project

├── external <- Any external source code, e.g., pull other git projects libraries

└── tools <- Any helper scripts go hereSetting up an empty structure such as the above can be done either by

making an template and then copying that every time you start a new

project. Additionally there are python packages such as

cookiecutter (pypi, rtfd, github)

that can automate this process for you, and offer a range of templates

to work with.

Name all files to reflect their content or function.

It is also convenient to use a consistent and descriptive naming

format for all your files and sub-folders. For example, use names such

as galaxy_count_table.csv, manuscript.md, or

light_curve_analysis.py. Do not using sequential numbers

(e.g., result1.csv, result2.csv) or a location

in a final manuscript (e.g., fig_3_a.png), since those

numbers will almost certainly change as the project evolves (and are

meaningless on their own).

Starting our project

Now we are ready to actually start doing something with our project.

Project evolution

In the typical project cycle for an astronomer or research software engineer (or RSE, a formal name for people who combine professional software expertise with an understanding of research), you will not sit down and have a detailed discussion about what the project is, where it needs to go, what the user stories and milestones are, and who will be involved. Usually research evolves organically through informal discussions with colleagues, or a sudden thought in the shower. Similarly our software projects evolve in an organic manner, often beginning with a small script of function to do just this one thing, which then over time gets used, reused, augmented, shared, and thus evolves into a software project. This evolution of ideas and code does not fit will with much of the more formal structures that professional software developers adhere to, and so we will not try to fit our projects to such a scheme. Instead we will create a path for our software that will be suited to our work style, but which draws on the knowledge and experience of professional software developers. Thus we will begin with a proof of concept code – a short bit of work that proves that something works using the minimal amount of effort.

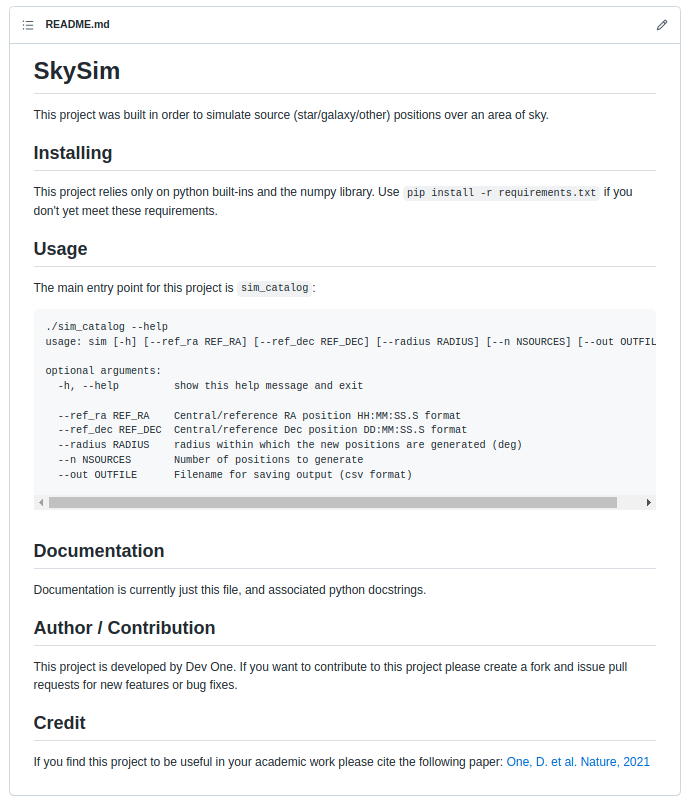

Example project

The example project that we will work with will involve tasks that are familiar to many astronomers. The work that is being done is mostly for demonstration purposes – existing libraries will be able to do this task faster and easier that we will. The point of this example project is not the content, but the methodology that we use as we pass through various cycles of development.

Example project: an astronomy catalogue simulator

The example project that we will be developing will simulate a catalogue of foreground stars in the direction of the Andromeda galaxy. The initial requirements are as follows:

Stars should have randomised sky positions around the Andromeda galaxy Positions should fall within 1 degree of the central location Each star should have a unique ID The star ID and position should be saved in a csv file to be analysed by other programs This program is intended to be used by the developer and their research group which includes people who are not proficient python programmers.

It is intended that the software will grow in capability and complexity only as needed to support a current research project.

With this in mind we move to the first stage of our software project – the proof of concept.

SOLO Activity: Project structure

Consider the astronomy catalogue simulator project mentioned in the previous lesson.

Come up with a planned project structure that would be appropriate for this project. How much of the structure would change if you were to use a different language (e.g. Python vs C)? The scope of work is quite modest and could be achieved with a flat file structure or a single file. Which statements in the previous lesson suggest that a good project structure will be beneficial to future work?

Proof of concept code

The first iteration of our code is shown below. It was written in a stream-of-conscious mode with a focus on getting a minimum working example that proves that the work can be done.

PYTHON

#! /usr/bin/env python

# Demonstrate that we can simulate a catalogue of stars on the sky

# Determine Andromeda location in ra/dec degrees

import numpy as np

import math

# from wikipedia

ra = '00:42:44.3'

dec = '41:16:09'

d, m, s = dec.split(':')

dec = int(d)+int(m)/60+float(s)/3600

h, m, s = ra.split(':')

ra = 15*(int(h)+int(m)/60+float(s)/3600)

ra = ra/math.cos(dec*math.pi/180)

# make 1000 stars within 1 degree of Andromeda

ra_offsets = np.random.uniform(-1, 1, size=1000)

dec_offsets = np.random.uniform(-1, 1, size=1000)

ras = ra + ra_offsets

decs = dec + dec_offsets

# now write these to a csv file for use by my other program

with open('catalog.csv', 'w') as f:

print("id,ra,dec", file=f)

for i in range(1000):

print("{0}, {1:7.4f}, {2:7.4f}".format(i, ras[i], decs[i]), file=f)The above code was saved as sim.py. When run from the

command line it produces a file (catalog.csv) with a

header, one thousand rows, and columns showing the id/ra/dec of the

simulated points on the sky. Right now the code runs without error and

at first glance does what we need.

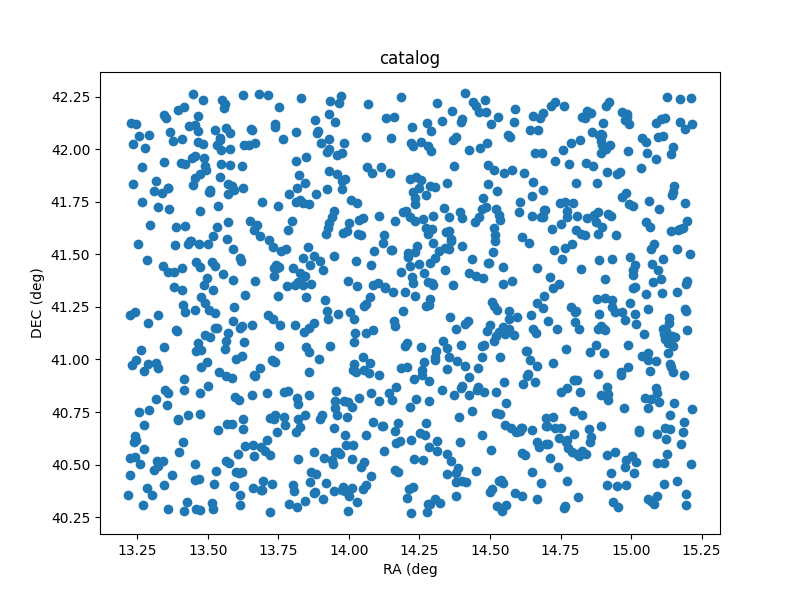

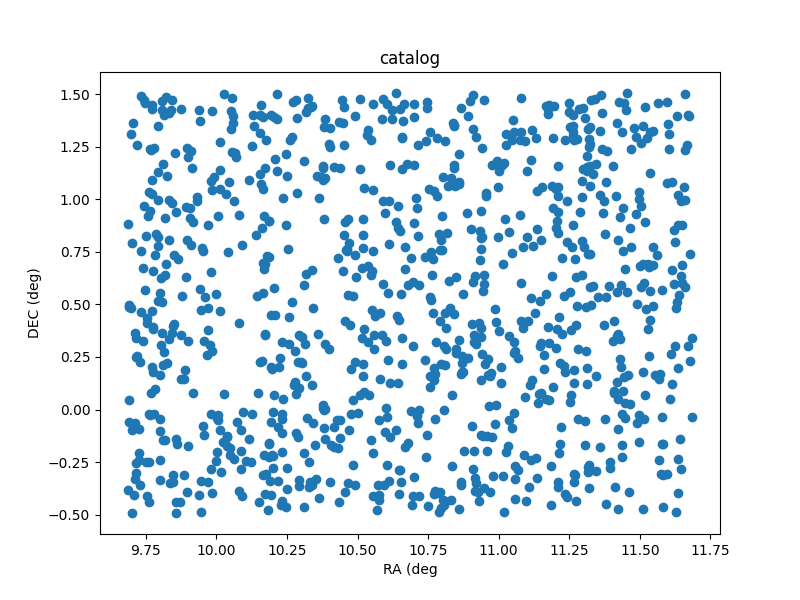

If we plot the ra/dec locations of the catalog we get the following output:

Sky plot of the catalog that comes from the sim.py program.

Making code reusable via functions

Now that we have proved to ourselves that it’s possible to generate a catalog, we want to tidy up the code a little so that it can be reused and adapted easily.

At the moment our intended audience is ourselves, so we are content

with keeping all the relevant information within the code itself. As a

first step we create new variables that record the target location

(ref_ra/ref_dec), the number of stars to generate

(nsources), and how far from the central location the new

positions should be generated (radius). This is done using

global variables at the top of our file like this:

PYTHON

#! /usr/bin/env python

# Demonstrate that we can simulate a catalogue of stars on the sky

# Determine Andromeda location in ra/dec degrees

import numpy as np

import math

# configuration using global variables

nsources = 1000

# from wikipedia

ref_ra = '00:42:44.3'

ref_dec = '41:16:09'

radius = 1From here we need to make a few changes to our code so that these variables are now used in place of the previously hard-coded values.

We then take all the code that is part of the position generation

stage and bundle it all together into a function which we call

generate_positions. This allows us to keep that part of the

code separate from the file writing stage. In fact while we are at it we

should make a function for doing the file writing. Lets call it

write_file. While we are writing these functions we can use

python docstrings to

document the intent of each function.

PYTHON

def generate_positions():

"""

Create `nsources` random locations within `radius` degrees of the reference `ref_ra`/`ref_dec`.

Returns

-------

ra, dec : numpy.array

Arrays of ra and dec coordinates in degrees.

"""

# convert DMS -> degrees

d, m, s = ref_dec.split(':')

dec = int(d)+int(m)/60+float(s)/3600

# convert HMS -> degrees

h, m, s = ref_ra.split(':')

ra = 15*(int(h)+int(m)/60+float(s)/3600)

ra = ra/math.cos(dec*math.pi/180) # don't forget projection effects

ra_offsets = np.random.uniform(-1*radius, radius, size=nsources)

dec_offsets = np.random.uniform(-1*radius, radius, size=nsources)

ras = ra + ra_offsets

decs = dec + dec_offsets

return ras, decs

def write_file(ras, decs):

"""

Write the ra/dec catalog to a file, and include a header and IDs.

"""

with open('catalog.csv', 'w') as f:

# creat a header row

print("id,ra,dec", file=f)

for i in range(nsources):

# use a csv format

print("{0}, {1:7.4f}, {2:7.4f}".format(i, ras[i], decs[i]), file=f)

returnNote that the first function takes no parameters, while the second is designed to take two lists of positions as input. By breaking our code into functional blocks we have a number of advantages:

We can reuse a block of code by calling the function multiple times, and don’t have to bother with duplicated code. Duplicated code means duplicated bugs! The code within each function will not interfere with code in other functions, allowing us to reuse names of variables, and for unused variables and memory to be deleted upon exiting the function. We can document each function separately using a docstring to describe the intent of the code. This is in addition to the inline comments that we have used.

Our script can now be finished with a few more lines of code.

As a developer the code is now separated into functional parts, so if something goes wrong or needs changing, we know where to look to make those changes. As a user of the code we can open the file and read the first few lines to see what the default parameters are, and modify them if we choose. Additionally, as a user we can read the names and docstrings of the functions to understand what the code is doing rather than having to read the code itself.

Now we have a piece of code that is easier to use. However, this code is only really usable as is, and requires people to read/edit the source code to understand how it works and adapt it for their use. In the next section we’ll see how to further generalise our code by using more function parameters, and adding a command line interface.

Creating a command line interface

In the previous lesson we saw how we could use functions to separate different tasks, and docstrings to describe the behaviour of these functions. In this lesson we’ll generalise our code even further by removing the global variables, adding functions parameters, and adding a command line interface.

The first thing that we will do is remove the global variables, and have these values passed to the functions directly. This will mean that someone reading the code for a function doesn’t have to hunt through the rest of the code to figure out what the global variables are.

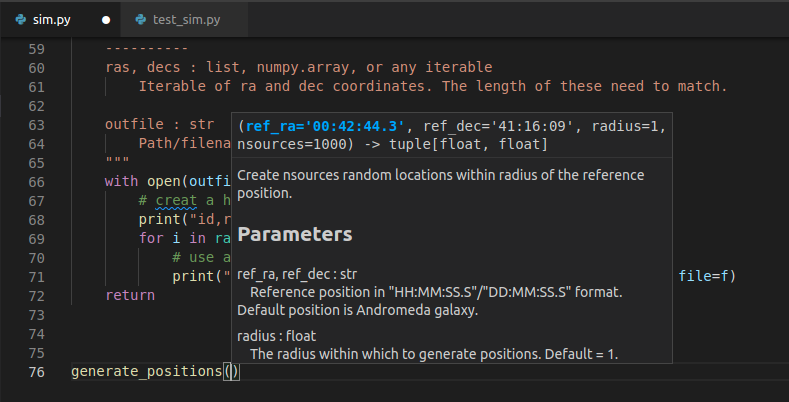

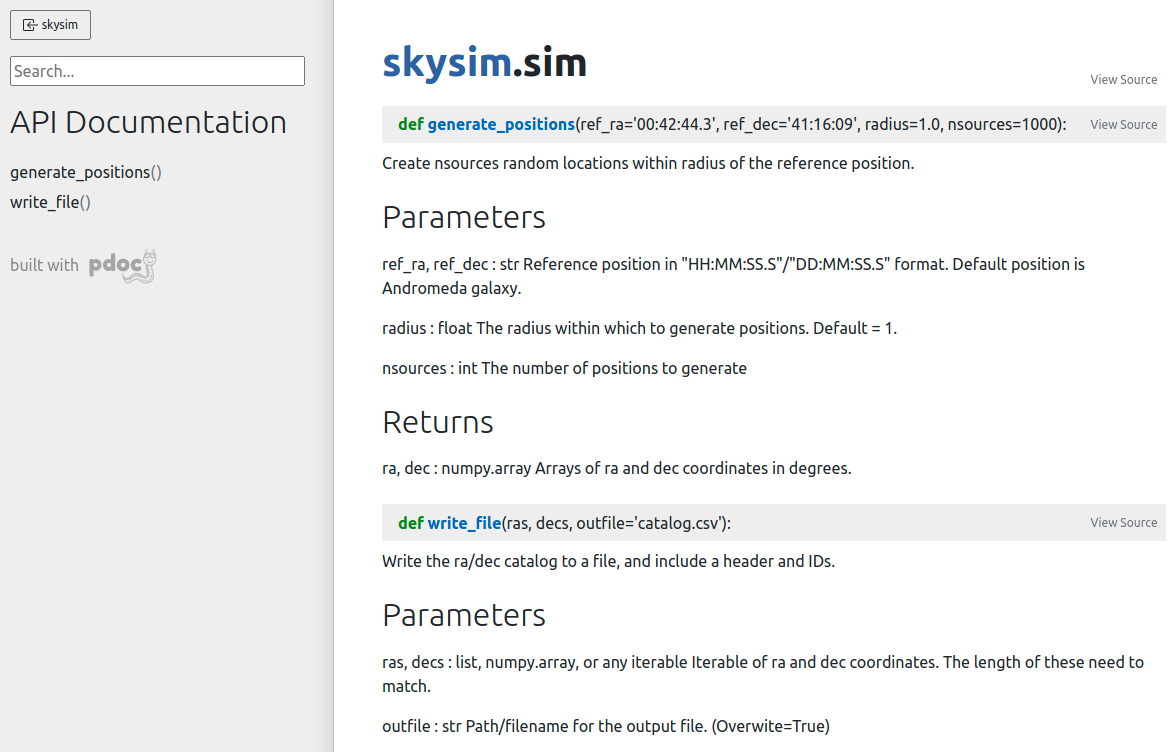

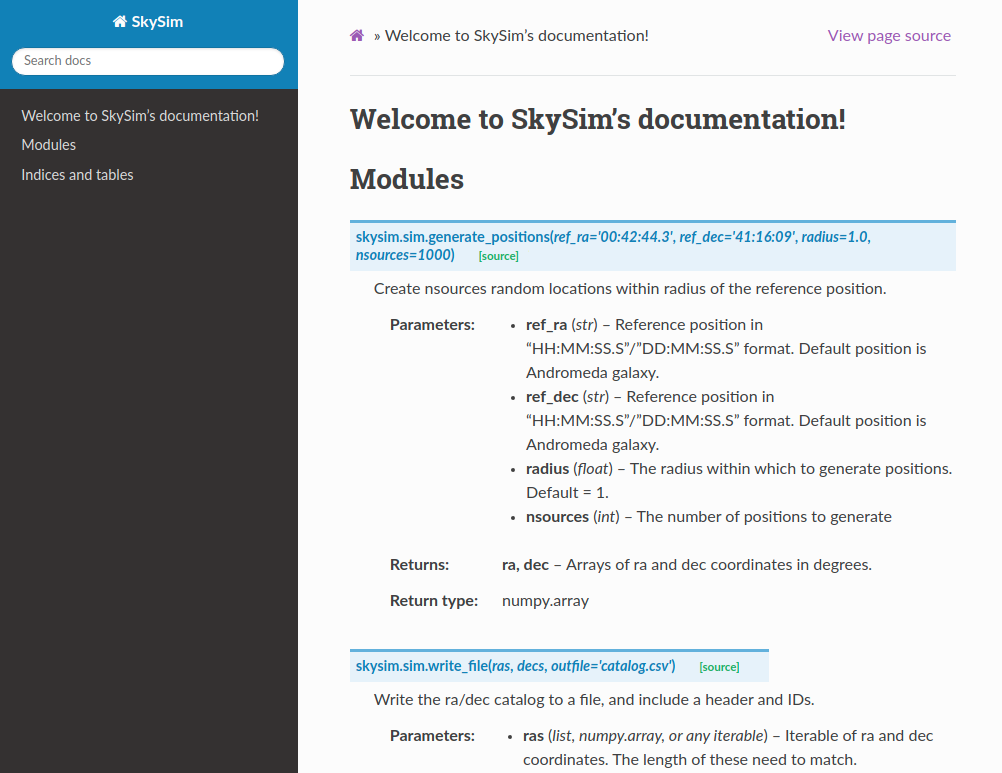

For the generate_positions function we can use

parameters with the same name as the previously existing global

variables, and we can set their default values to match. We have also

taken the opportunity to update the docstring so that we know what types

of parameters should be passed and what they are for.

PYTHON

def generate_positions(ref_ra='00:42:44.3',

ref_dec='41:16:09',

radius=1.,

nsources=1000):

"""

Create nsources random locations within radius of the reference position.

Parameters

----------

ref_ra, ref_dec : str

Reference position in "HH:MM:SS.S"/"DD:MM:SS.S" format.

Default position is Andromeda galaxy.

radius : float

The radius within which to generate positions. Default = 1.

nsources : int

The number of positions to generate

Returns

-------

ra, dec : numpy.array

Arrays of ra and dec coordinates in degrees.

"""

...

returnFor the write_file function, we already had two

parameters that needed to be passed, but now we’ll add another which is

the output file name. This wasn’t part of the global variables, but it

was a hard coded file that we might want to change as we create multiple

catalogs. Note that this function used to use the nsources

global variable to know how many ra/dec values were passed. In this

revised version we just look at the length of the ras list

instead.

PYTHON

def write_file(ras, decs,

outfile='catalog.csv'):

"""

Write the ra/dec catalog to a file, and include a header and IDs.

Parameters

----------

ras, decs : list, numpy.array, or any iterable

Iterable of ra and dec coordinates. The length of these need to match.

outfile : str

Path/filename for the output file. (Overwite=True)

"""

with open(outfile, 'w') as f:

# creat a header row

print("id,ra,dec", file=f)

for i in range(len(ras)):

# use a csv format

print("{0}, {1:7.4f}, {2:7.4f}".format(i, ras[i], decs[i]), file=f)

returnBy moving the global variables into the parameters of the functions, and making use of the default values we don’t need to make any further changes to our code. The following block is unchanged.

One advantage to our changes is that we could now generate a different catalog by adjusting these last two lines like this:

We will leverage this ability in order to make a command line

interface. The recommended way to do this is to use the

argparse module, and an if __name__ clause as

follows.

PYTHON

if __name__ == '__main__':

# Set up the parser with all the options that you want

parser = argparse.ArgumentParser(prog='sim')

group1 = parser.add_argument_group()

group1.add_argument('--ref_ra', dest='ref_ra', type=str, default='00:42:44.3',

help='Central/reference RA position HH:MM:SS.S format')

group1.add_argument('--ref_dec', dest='ref_dec', type=str, default='41:16:09',

help='Central/reference Dec position DD:MM:SS.S format')

group1.add_argument('--radius', dest='radius', type=float, default=1.,

help='radius within which the new positions are generated (deg)')

group1.add_argument('--n', dest='nsources', type=int, default=1_000,

help='Number of positions to generate')

group1.add_argument('--out', dest='outfile', type=str, default='catalog.csv',

help='Filename for saving output (csv format)')

# parse the command line input

options = parser.parse_args()Here we have created a single group of arguments called

group1, and then we add five different arguments to that

group. Note that we provide both a call signature (--out)

for specifying a parameter, as well as the name/type/default value of

that parameter (dest/type/default). The help

is optional but highly recommended. If we were to run the code above

with the --help option then we would get the following

output:

OUTPUT

$> python sim.py --help

usage: sim [-h] [--ref_ra REF_RA] [--ref_dec REF_DEC] [--radius RADIUS] [--n NSOURCES] [--out OUTFILE]

optional arguments:

-h, --help show this help message and exit

--ref_ra REF_RA Central/reference RA position HH:MM:SS.S format

--ref_dec REF_DEC Central/reference Dec position DD:MM:SS.S format

--radius RADIUS radius within which the new positions are generated (deg)

--n NSOURCES Number of positions to generate

--out OUTFILE Filename for saving output (csv format)Right away we have a way for people to understand how to use the program without having to open the source code. This will include you, two days from now, when you forgot some of the details of the code.

Finally, we can connect the user input to the program by using the

options object. Each of the parameters that were read in

with a dest=thing can be accessed using

options.thing. If we specified a type then argparse will

make sure that users don’t give input that can’t be converted to that

type. The final part of our code now looks like this:

PYTHON

# parse the command line input

options = parser.parse_args()

ras, decs = generate_positions(ref_ra=options.ref_ra,

ref_dec=options.ref_dec,

radius=options.radius,

nsources=options.nsources)

write_file(ras, decs, outfile=options.outfile)Since we specified default values for all of the inputs, we can run

python sim.py and it will run with the default values.

Creating a simple testing script

We now have a script with a command line interface that allows people to use the code without having to read the source. At this point we are feeling rather professional and are happy to share the code with others. One of the things that is often scary is the thought of others finding bugs in our code and then having to fix these bugs. A good way to alleviate these fears is to do some testing of the code ourselves to catch all the obvious errors before the script is sent to others. In a later lesson we’ll go through some more formal and rigorous testing, but for now we are going to start simple with some of the most basic tests.

In our file test_sim.sh we will make three tests. The

first two tests we are going to do will just ensure that the code will

not immediately crash when we run it:

BASH

#! /usr/bin/env bash

echo "Testing sim.py"

python sim.py || { echo "FAILED to run with default parameters"; exit 1 ;}

python sim.py --help || { echo "FAILED to print help"; exit 1 ;}The next test we do will ensure that when we pass a filename to the

--out option, we will get a new file with that name. We use

the bash [ ] test and -f conditional to see

that the file exists.

BASH

python sim.py --out test.csv

if [ ! -f "test.csv" ]; then

echo "FAILED to generate ouput test.csv"

exit 1

fi

echo "all tests PASSED"

exit 0The last two lines above then make sure that when the tests all pass we get a positive message and return an exit status of 0.

We now have a test script that will catch the most egregious issues with our script. Any time we make changes to our script we should run the test script just to make sure that we haven’t seriously broken anything.

More advanced testing with bash is not explored here as we will move to a python based test environment in a later lesson. The python based testing will make it easier to do things like make sure the right number of entries exist in the catalogue file and that the positions all lie within the specified region.

Summary

In this first development cycle we have:

- Demonstrated that we can simulate a catalogue of positions on the sky;

- Written a function to do the simulation, and another to write the results to a file;

- Created a command line interface to make the script easy to use and re-use; and

- Made a simple test script to alert us when our changes break the code.

If you would like to see the status of the project at the end of this cycle you can look at the cycle1 branch on GitHub (at this link).

Content from Developing Software In A Team

Last updated on 2026-02-27 | Edit this page

Estimated time: 180 minutes

Overview

Questions

- What are some pro/con of developing in a team?

- What are some common team roles?

- How can GitHub help managing tasks in a group project?

Objectives

- Gain experience developing software in a team environment

- Understand some common project management styles

- Create a new GitHub repo and push our project

- Experience a full development cycle with our test project

Benefits and pit-falls

Working on a software project as part of a team is a very different experience from working on a solo project. There are of course great advantages to having multiple people working on a problem, but there are also some pit-falls that need to be avoided, and overheads that cannot be avoided.

Benefits of working in a team

- Each person has different areas of expertise

- More person-hours available

- Group discussion leads to better decision making

- Group members can learn from each other faster than they can learn from a book/website

- Seeing how others work and solve problems can help you learn new skills that you didn’t know you needed

- Bug-fixing is easier when you have an informed buddy to talk to

- Work can be assigned to people with the most relevant skills increasing efficiency

- Dividing the development and testing of a work item between two people can make for better code and more complete tests

Pit-falls to avoid

- Siloing work:

- Separating work items can be a good idea but there needs to be frequent check-ins to ensure that the software being developed by two people is not diverging or conflicting

- Inconsistent standards:

- Even though people may have personal preferences or styles, documentation, commenting, and testing should be consistent across a code base.

- Non-constructive criticism:

- When things work they just work and no one talks about it

- When things break everyone talks about it

- Given that issues result from negative experiences, it is important for team moral to always try and keep feedback as constructive as possible

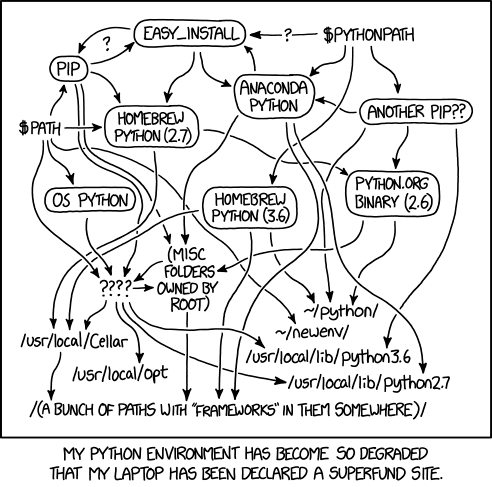

- Platform / dependency conflicts:

- There is no guarantee that all developers will be using the same operating system or development tools.

- Allowing people the freedom to choose is important but the differences need to be managed via an agreement on how/when/where testing takes place, what acceptance looks like, and the use of a style guide.

- Having some files with windows style end of line and others with linux style end of line can make it tedious to track changes

Overheads that cannot (should not) be avoided

Project management will take time, and may not be a skill that you have. Invest time in training. Communication and coordination take time but are vital to success Commit conflicts will arise even if everything is done right, these need to be discussed and handled properly rather than ignored The moral of the team is important to success and will take time and effort to maintain, but it is worth doing so that your project can see the benefits listed above.

Communication and project management

Communication

Team work requires effective communication between team members. This helps to keep everyone up to date on the status of the project, the current and future direction of the work, and avoids duplication of effort. Email, slack, messenger, GitHub issues, chats over a coffee, or a formal weekly meeting, are all valid ways of keeping the teem together. A formal meeting once a month complimented by a weekly email to status check, and real time slack messaging for immediate questions may be a good solution for a 5 person team working on a year long project. An informal weekly chat over lunch with intermittent emails may be good for a 2 person team working on a project that lasts a few months. The most important thing is that the chosen method is effective and that it includes all team members.

If an informal or ephemeral communication medium is involved when coming to an agreement or making a decision, it is good practice to have a follow up communication to reiterate the decision and reasoning using a medium that can be archived. For example, if the team meets over a coffee, discusses a current issue, and decides on a way forward, it is a good idea for someone to follow this up with an email so that the details of the decision are not lost.

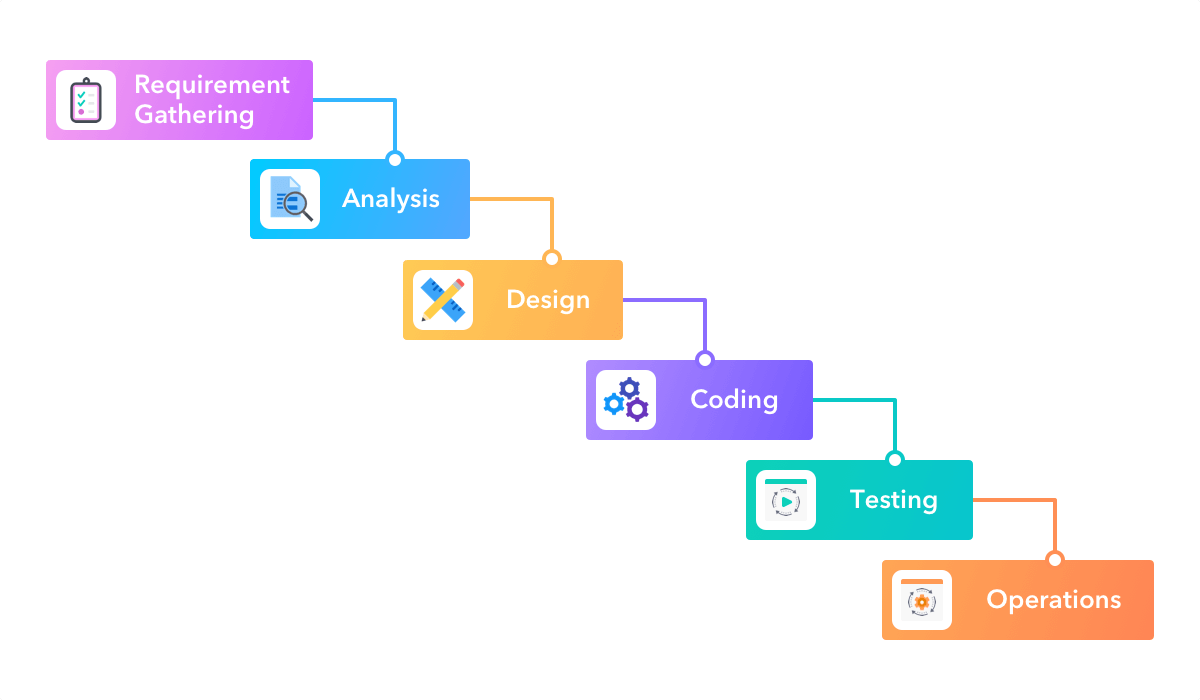

Project management styles

The two main project management styles that are applied to software development are waterfall and agile. Neither of these are a project management methodology but are more like umbrella terms that group a set of methodologies that share a common mindset. In the waterfall mindset there is a very linear approach to the design and execution and delivery of the project with the main focus being on the process. In the agile mindset the main focus is on outcomes and deliverables, with the design and execution and delivery occurring in cycles.

Waterfall

In a waterfall project the whole life cycle of a project is mapped onto distinct, sequential work items, with each item relying on those that occur before, and blocking those that come after. Waterfall project management therefore represents a very rigid and linear approach. The waterfall system is a very traditional method for managing a project with participants being assigned clear roles and expectations.

Image credit: https://startinfinity.com/project-management-methodologies/waterfall

Image credit: https://startinfinity.com/project-management-methodologies/waterfall

The name waterfall comes from the fact that each work item will cascade onto the next. In a waterfall project there is a lot of attention paid to defining the entirety of the project up front, and then a close adherence to the project plan and timeline. It is therefore difficult to incorporate changes into the project plan, and therefore it is hard to respond to setbacks, opportunities, or changes in requirements.

The waterfall project management style was initially designed in the 1970’s for use in software development projects. It was taken on by many other industries to great success, but is now seen as an outdated methodology for software projects.

Agile

In 2001 the Manifesto for Agile Software Development was published. The agile manifesto can be summed up as:

We are uncovering better ways of developing software by doing it and helping others do it. Through this work we have come to value:

Individuals and interactions over processes and tools Working software over comprehensive documentation Customer collaboration over contract negotiation Responding to change over following a plan

That is, while there is value in the items on the right, we value the items on the left more.

The agile manifesto is based on the following principles:

- The highest priority is to satisfy the customer through early and continuous delivery of valuable software.

- Welcome changing requirements, even late in development. Agile processes harness change for the customer’s competitive advantage.

- Deliver working software frequently, from a couple of weeks to a couple of months, with a preference to the shorter timescale.

- Business people and developers must work together daily throughout the project.

- Build projects around motivated individuals. Give them the environment and support they need, and trust them to get the job done.

- The most efficient and effective method of conveying information to and within a development team is face-to-face conversation.

- Working software is the primary measure of progress.

- Agile processes promote sustainable development. The sponsors, developers, and users should be able to maintain a constant pace indefinitely.

- Continuous attention to technical excellence and good design enhances agility.

- Simplicity–the art of maximising the amount of work not done–is essential.

- The best architectures, requirements, and designs emerge from self-organising teams.

- At regular intervals, the team reflects on how to become more effective, then tunes and adjusts its behaviour accordingly.

- The agile mentality is designed to be able to be flexible, respond to change, deliver a working product early and have it improve incrementally over time.

Advantages of the agile mentality include:

- faster deployment of working solutions

- better quality of work

- increased client satisfaction

- reduced risk thanks to frequent testing and deployment

- increased team moral

- a project that finishes early is still able to deliver a working result

Some potential drawbacks include:

- potential for scope creep from continuous client feedback

- workload management can be difficult when duties and requirements are not known far in advance

- short development cycles can mean that skills gaps are not identified early leading to delays in delivery

You will likely see that the Agile development mentality fits much better with research project management including both traditional research work and software development projects. Additionally you may note that your organisation may employ a mix of different management methodologies for different projects, or at different levels of the organisation. Understanding the benefits and drawbacks of each will help you choose a project management style that works best for your project.

Popular Agile methodologies

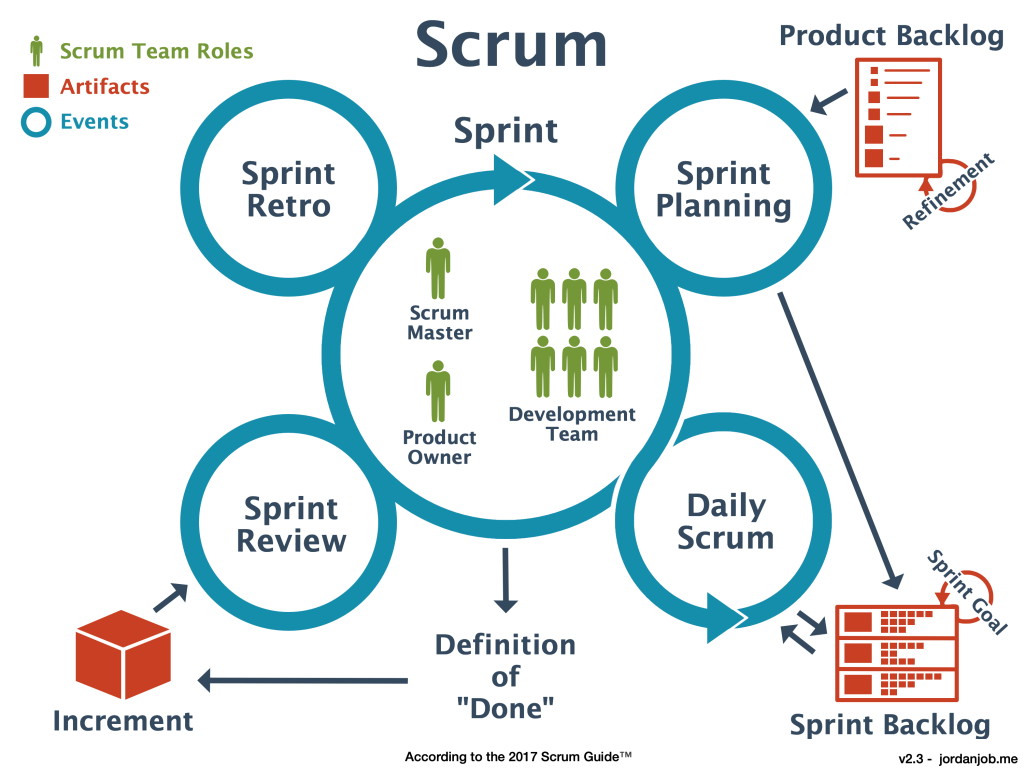

Scrum

Scrum is mainly focused on the idea of sprints. Sprints are where the bulk of the “work” gets done, although there is a significant amount of effort put into the preparation and planning of each sprint, and then the post-sprint review and retrospective. Sprints are typically 1-2 weeks in duration and will focus on a particular set of goals. During a sprint there is usually a daily scrum at the start of the day in which people talk about what they did the day before, what their plans are for today, and what problems they might foresee. The sprint review is about reviewing the work that was completed, reporting related to said work, and identification of incomplete work. The retrospective is a more meta-level reflection on how the sprint went, meant to identify how the team worked together, what organisation or communication worked well or not, and what changes could be made to make the next sprint more productive.

Image credit: https://jordanjob.me/blog/scrum-diagram/

Image credit: https://jordanjob.me/blog/scrum-diagram/

The scrum methodology is based on team work and has well defined roles. The benefits of scrum include the ability to react to changes in requirements or resourcing, continuous testing/integration is effectively built in, prioritisation of tasks can be adjusted throughout the process. The disadvantages of scrum are that you need very good organisation before each sprint to maximise productivity. Additionally it requires that team members are able to block out a 1-2 week period in which they focus solely on the sprint and maintain a high focus. Clearly, scrum requires a medium to large team (5+) in order to be effective.

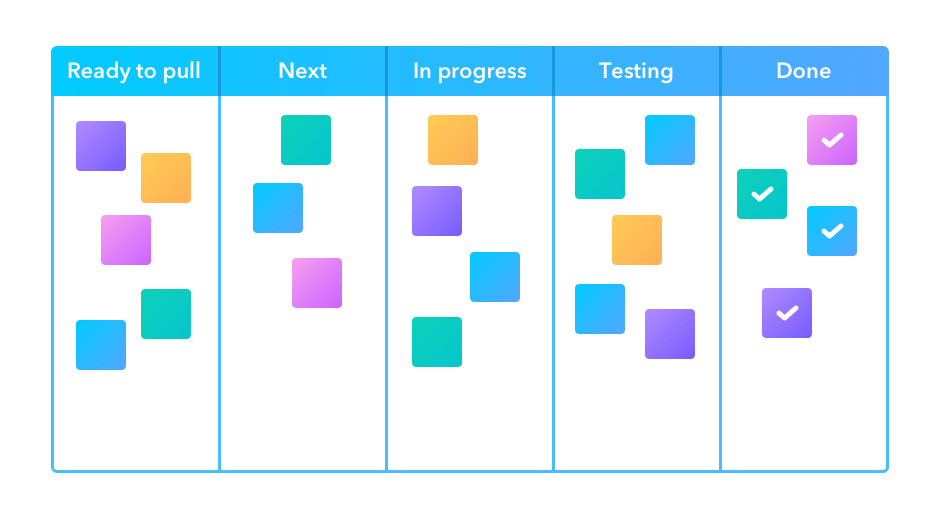

Kanban

In the Kanban methodology the main focus is on the visualisation that is used during the development process – the Kanban board. One goal of the board is to identify potential bottlenecks in the process. An example board is shown below with the following features:

Tasks that are represented as squares. Sticky notes or cards are often used on a physical wall, or their digital representation online.

Columns that represent the state of each task. A task is expected to migrate from the left most column (Ready/Backlog) through the central columns and into the final (Done/Complete) column, as the related work is being done.

Work in progress limits. In order to maintain focus and productivity, there are often limits on the number of cards that can be placed into some of the columns. In particular the “in progress” and “testing” columns are limited by the available people time allocated to the project.

Swim lanes (not shown), are rows that separate tasks based on categories of work such as documentation, testing, or reporting. Alternatively, colour coding of cards can be used to identify these categories.

Image

credit: https://startinfinity.com/project-management-methodologies/kanban

Image

credit: https://startinfinity.com/project-management-methodologies/kanban

Even when the Kanban methodology is not being explicitly used, the visualisation process is so powerful that it often used in many other project management strategies. It’s not uncommon to see a Kanban board on the wall during a two week sprint.

The advantage of Kanban is that you have a single place to track the progress of all the tasks, and bottle necks can be quickly identified. One of the main disadvantages of Kanban come from people failing to update the Kanban board as a task is being worked on (poor communication), or updating too often (high overhead). Many project management software tools incorporate a Kanban board and tools like JIRA even have a way of migrating and updating tasks based on activity in a linked GitHub repository so that people can keep the Kanban up to date without having to visit the board itself.

Summary

Communication and effective project management are critical to the success of any collaborative project. There is no one-size-fits-all solution, and you will need to consider the project requirements, and the size and expertise of your team when deciding on a communication and management strategy. Thankfully there are many project management tools online that are free that will support whatever choice you make.

Member roles

Depending on the scope of your project, the size of the team, and the management strategy that is being used, you will have a number of roles that need to be filled within the team. Below is a list of some common roles that may be applicable to your project along with a short description. It should be noted that not all roles are required for all projects, and that the roles do not need to map to people on a 1:1 basis. It is normal to have multiple people per role and to have some people acting in multiple roles. The most important thing is that you consider the different roles and responsibilities, and have at least an implicit agreement on who is going to be filling each of the roles. This will help people to understand their responsibilities and who they should be handing work over to or working with during the project development.

Roles

- Software Developer

- Primarily focused on writing the software, fixing bugs, implementing new features.

- Should be responsive to issues, and provide implementation advice to the team.

- Documentation and Test Developer

- Primarily focused on the creation of documentation and developing tests.

- Test cases and docstrings should be consistent.

- Typically also will be responsible for automating the testing process and reporting issues.

- Documentation can be focused on docstrings but can also include online documentation, help files, tutorials, and examples.

- Product Owner

- The person who takes responsibility for setting the software project goals.

- This person is tasked with understanding the client/user needs and translating them into user stories, milestones, and tasks.

- Domain Expert

- Someone who has a good understanding of the context in which the software will be deployed or used.

- When developing software for astronomers, it is not essential for all the development team to have a good understanding of the general or specific field of application.

- A domain expert can be an internal point of contact for the development team when they have questions about the correctness or utility of an aspect of theproject.

- Project Manager

- Primarily focused on the organisation of the team, ensuring that team members have tasks matched to their skills, and that the required skills are available within the team.

- The project manager will be responsible for the timing and scheduling of work and deadlines.

- Reviewer

- Any piece of work that is completed should be assigned to a reviewer to ensure that the work is up to standard.

- The reviewer should have a good understanding of the goals of a piece of work and be able to give feedback on areas that need improvement.

- The reviewer should ideally not be involved in the development of the piece of work they are reviewing.

- Approver

- Similar to a reviewer, except that there is no requirement for an approver to understand the implementation of the work being done.

- An approver is focused on ensuring that the proper procedures have been followed.

- User Acceptance Tester

- Someone who is able to perform the necessary tests to ensure that each of the user stories have been met.

- Acceptance testing is typically not able to be automated and will require manual interaction to ensure that the end user can perform the tasks outline in the user stories.

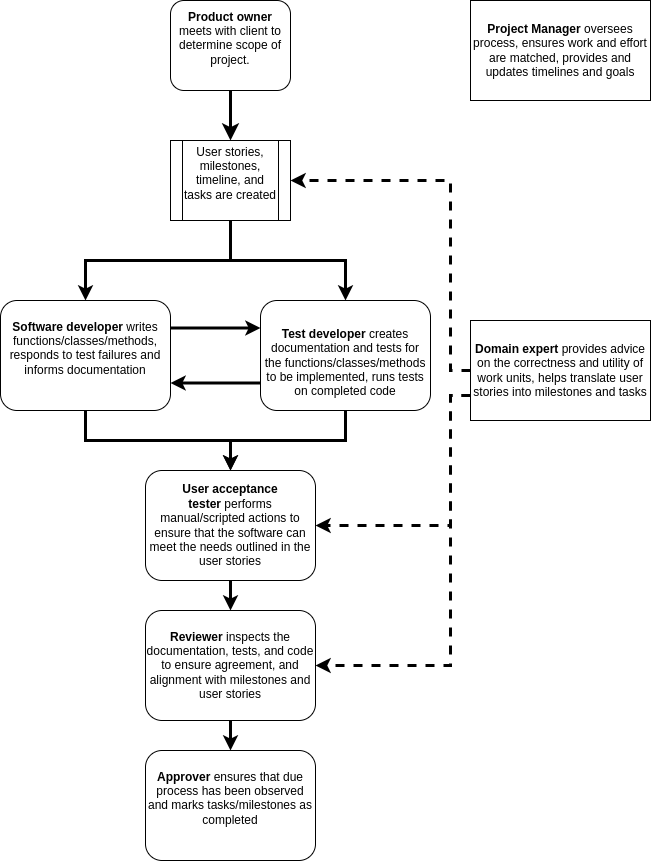

The following workflow describes the interaction of the above roles within a generic software project:

Solid lines indicate the flow of content for action, approval, or feedback. Dashed lines indicate input in the form of advice or oversight.

GROUP Activity: Who does what and when?

Within your software project group review the past three lessons and discuss the following:

- What software development roles are required for your project?

- What project management roles are required for your project?

- Are there any roles that would be beneficial to your project that are not listed?

- How are these roles distributed among the team?

- Are there people with multiple roles or roles shared among multiple people?

- Who determines the timeline and deliverables for this project?

- Is the timeline flexible?

- Can you classify the deliverables as one of required/desired/optional?

- How often and in what format should communication be managed?

If you have been involved in a previous software development project, please share the following with your teammates:

- What practices worked well and could be brought into this and future projects?

- What practices didn’t work well and should be avoided in the future?

- Was the previous project larger or smaller than the current one and how would that affect your choice of management/communication?

Create project standards and expectations

When a single person is working on a project there will typically be

a consistency imposed simply by the fact that the developer has ‘their

way’ of doing things (though this consistency may not exist through

time). When multiple developers are working on a project it is good

practice to have an agreed up on set of standards that will be followed

to ensure that the project has a consistent style and that common

practices are followed. These practices can include how/when to test,

the branch/develop/merge cycle, documentation formats, as well as code

style. A common place to note these standards and expectations is in the

CONTRIBUTING.md file in the root of your software project.

This file should be considered to be supplementary to the

README.md file, and for a slightly different audience.

Whilst the README.md file is aimed at users of the software, the

CONTRIBUTING.md file is aimed at people who might develop

the software, give feedback, or submit bug/feature requests.

Sections to consider for CONTRIBUTING.md

- Welcome and encourage people to contribute to the project

- Table of Contents (especially if the file is long)

- Style guide

- List standards for code style

- Consider using a linter and listing it here (with settings)

- Note the docstring format and guidelines

- Testing

- Where the tests are located

- How the test are run

- The machine/environment on which the tests are expected to pass

- How to submit changes

- Who can submit changes

- Expectations for what changes will be accepted

- The pull request approval process

- How to report a bug

- What is expected for a good bug report

- What tags/categories should be used when submitting a report

- How to request an “enhancement”

- What enhancements are likely to be support and which are not

- Templates

- Examples for enhancement / bug report / change requests.

- Code of Conduct

- Expectations for behaviour when contributing to the project

- Consequences for breaching the code of conduct

- Email/web address for reporting breaches

- Recognition model

- Let people know how their contributions will be recognised.

- The following may be appropriate:

- An acknowledgement section on the wiki

- Co-authorship or acknowledgements in a published paper

- Invitation to join as github contributor

- A shout out on social media

- A beverage of choice

The CONTRIBUTING.md file can become quite long if all of

the above are included. The key is to have a record of how people should

interact with others and with the project and that the project

maintainers adhere to these guide lines.

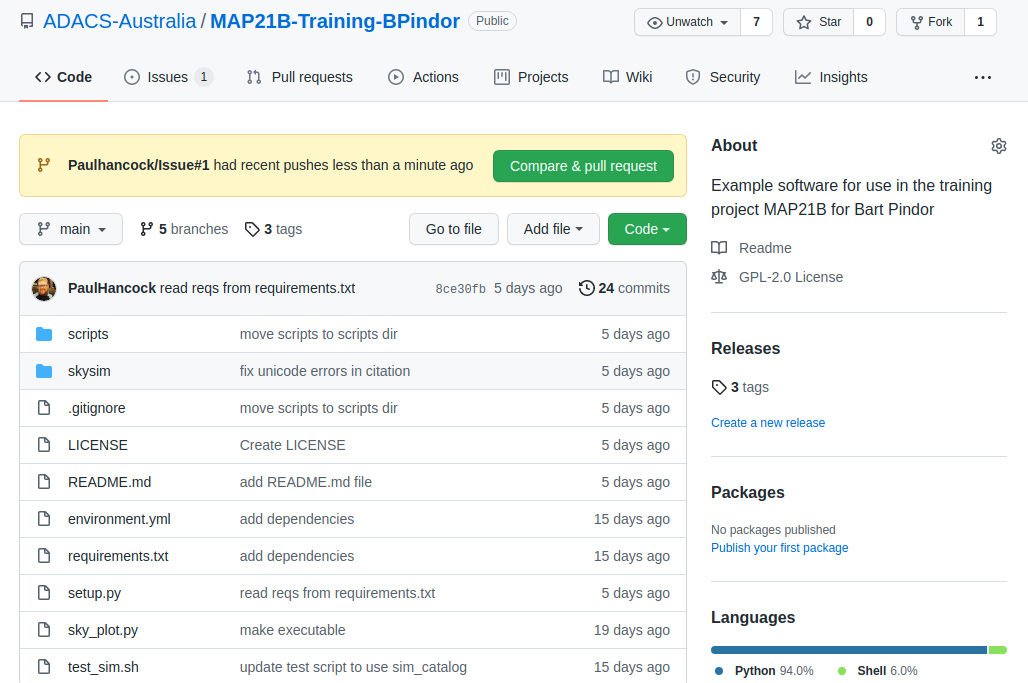

Creating a GitHub repository

At the moment we have a bunch of code, documentation, and associated files. We wish to make these available to others in the easiest way possible. We could just zip them all up and email to collaborators. However this means that collaborators no longer benefit from any future development that you do, and even worse, will start asking questions about code that may have diverged from the version you are working on. A solution to this problem is to keep all your code under version control, and to make use of one of the many free, online repositories to host a copy of the code. For this lesson we’ll focus on GitHub, but gitlab and bitbucket are also good alternatives.

Create an account on Github

Github accounts are free, you just need an email address to sign up. Since some academic institutions shut off your email address promptly when your contract ends, it may be a good idea to use a non-institutional or personal email to sign up to github or other services that will outlast your current contract.

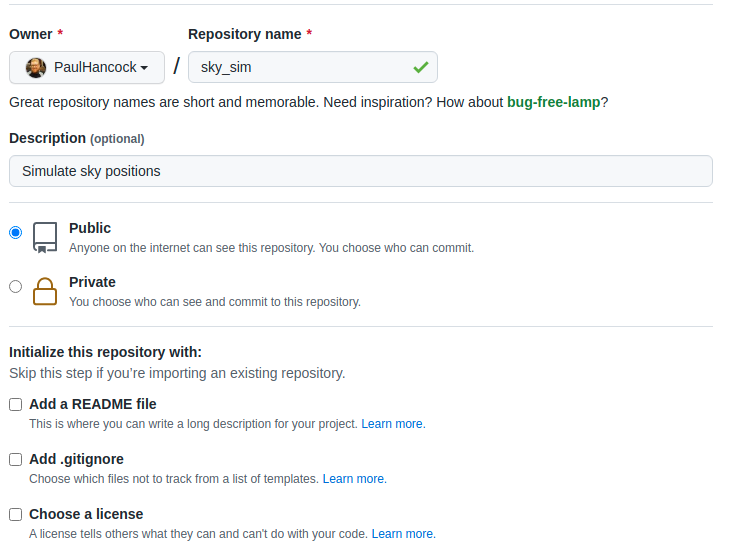

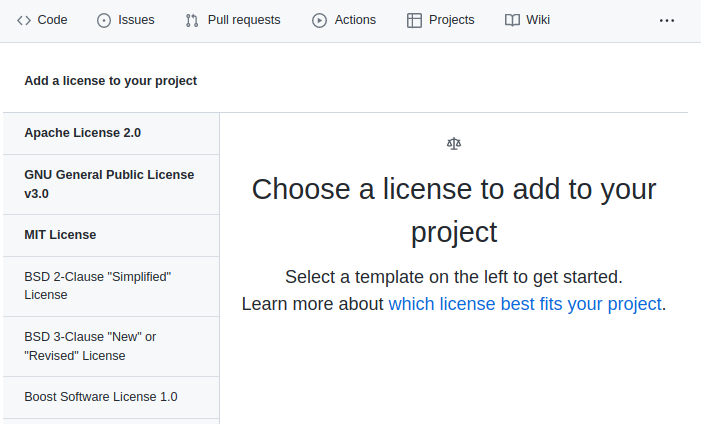

Create a new repository on Github

Go to Github and sign in. You should be taken to a page which has a listing of your repositories and a green button to create a new one. Click the button and you should see a screen similar to the following.

For now, lets create a truly empty repository so don’t select any of the last three boxes. Just give the repo a name (your project name is a good choice if available). You can change the description later or fill it in now.

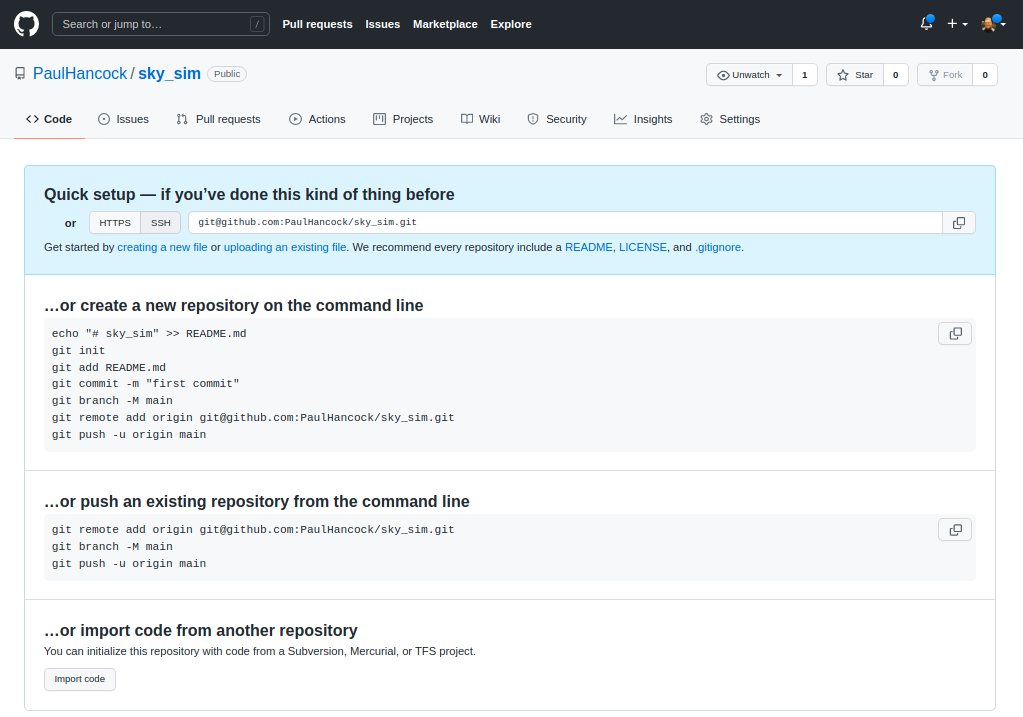

Once you create the repository you’ll see a set of instructions about what to do next.

Now we are going to go with “create a new repository from the command line”.

The first part is to make a new local git repository using init / add / commit and to name the branch to be main (instead of master).

BASH

git init

git add README.md

git add requirements.txt encironment.yml

git add sim_catalog skysim/*.py

git commit -m "first commit"

git branch -M mainThe next step is to link your local repository with the one that you

just created on Github. Replace PaulHancock/sky_sim.git

with your username and the name of the repo that you chose.

After you have done the git push you’ll have added all

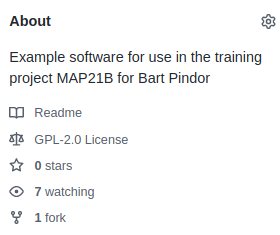

your local changes to the remote repository and you can view the new

state of the project on Github. Note how your README.md

file gets rendered into a nice splash page.

When you push to origin main you’ll have to authenticate

with Github, see the github

documentation for instructions on how to set that up for ssh.

If you set your repository to be public then anyone on the internet

can see and download your code, they can make a fork (copy) of it to

work on themselves, and can even send feature requests or bug reports

via the issue tracker. We will get into those features later, but for

now all you need to do is let your collaborators know that your code is

available on Github, send them the link, and then they can

download/clone it as needed. An added bonus is that as you make changes

to your code, and then add/commit/push these changes, your

collaborators can then pull those changes and get the updates without

having to bug you about it.

Issue tracking on GitHub

Once your code is in the wild hopefully people will find it, use it, and give feedback. Positive feedback in person or by email is always nice, however there is also the issue of bugs, new feature requests, and people wanting clarification. Dealing with these last three points requires some organisation, and so we will learn how to use an issue tracker for this.

Github, gitlab, and bitbucket all offer an form of issue tracking that is attached to each of your software repositories. In this lesson we’ll focus on the Github issue tracker, but the lessons learned here are applicable to any issue tracker system.

The issue tracker is a way of engaging with your co-developers and

end-users to discuss any problems that people may be having when using

the software. The issue tracker is available for all Github

repositories, and enabled by default. However, publishing code on Github

does not mean that you are obligated to provide any support at all. If

you don’t intend on providing support for your software, it would be a

good idea to mention this in the README.md file that is

shown on the landing page so that people have clear expectations. If you

do intend to provide support and receive feedback then the issue tracker

is for you.

Overview

We will cover four of the most common issues that you are likely to see or use on the issue tracker, and give some guidance and advice about how to approach them. We’ll cover general questions, bug reports, feature requests, and pull requests.

Github issues has become a full featured work planning and project management system (see link), most of which is beyond the scope of this course. We will be focusing on the basic capabilities of the issue tracker to get you and your group started. Once you are up and running you should explore the other features.

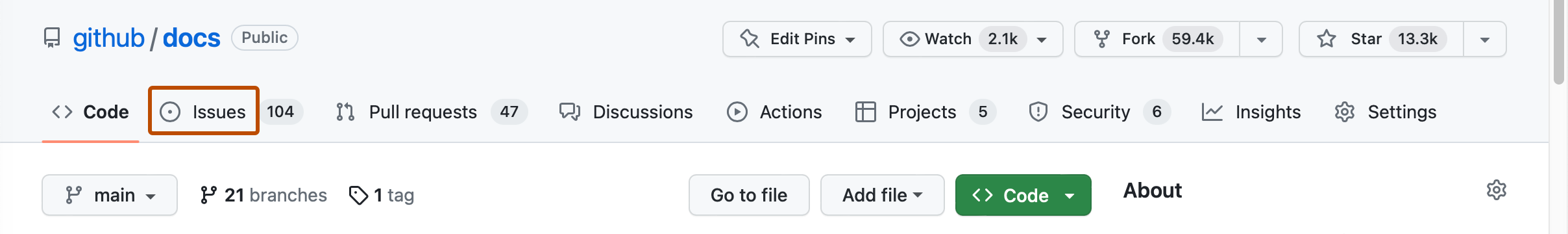

To begin, let’s navigate to the github repository for our project of

choice. On the front page you should see a set of tabs. By default

you’ll be seeing the <> code tab, but we want to

select the ⊙ Issues tab.

Initially this will be blank for your project because there are no issues (yay).

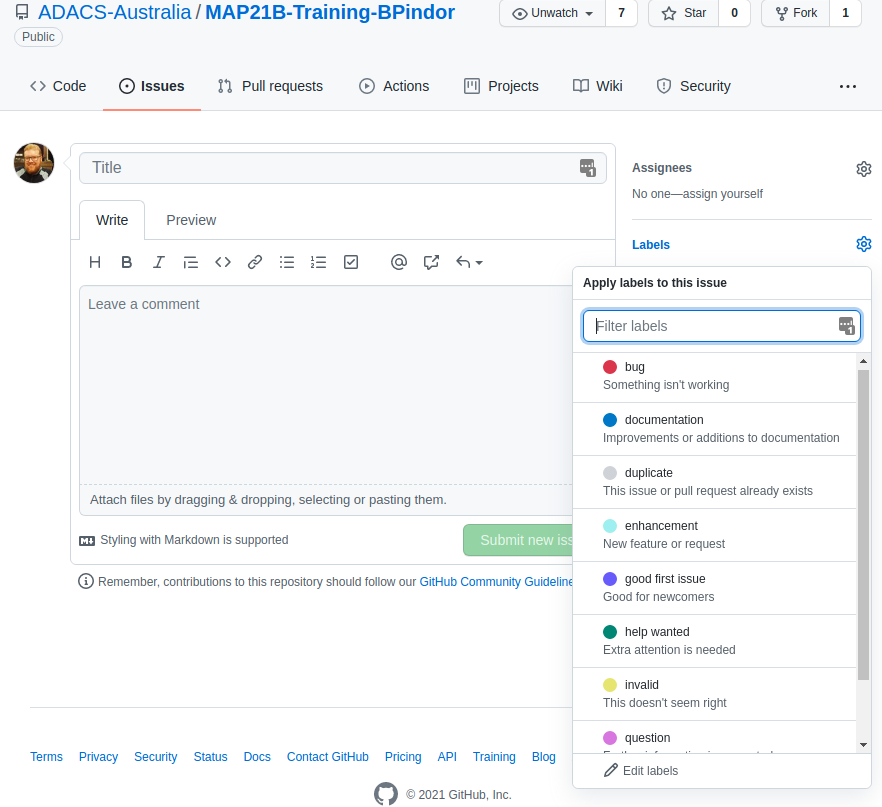

Creating an issue

Navigate to the Issues tab of a repository on Github and

you’ll see a “New issue” button in green. Press this and we’ll explore

some of the options.

An issue has a title (or short description) and a comment (long description). When creating an issue you can add some labels to it so that others can easily understand what kind of issue you are reporting. Github has a range of built in labels, and the repository owner/admins can create more if needed.

Once an issue has been created, the author or repository owner/admins

can adjust the issue by assigning people to work on it, updating the

labels. This is a helpful piece of organisational work. Others can can

also comment on the issue so that there is a back and forth between the

developers, the person reporting the issue, and anyone else experiencing

the same/similar issues. When an issue is resolved it can be marked as

closed. Closed issues are not shown by default, but can be seen by

removing the is:open or add the is:closed

filter.

We will now go through some of the different types of issues that are typically reported and in the next lesson we’ll have a go at creating/resolving these issues.

Questions

This tag is usually used by people asking for clarification. There is not necessarily any issue with the code, it is just that the user doesn’t understand something. The threaded structure of the issue tracker makes this a useful place to have a discussion about the question. Questions usually lead to additional documentation being created – for example an FAQ section on the wiki for the repository. Questions can also be escalated into bugs or feature requests.

If someone sends you a question via email that does not involve personal/private information, then it is often a good idea to ask them to post the question on the issue tracker and then discuss it there. If the person isn’t that interested in using Github then I often just ask for their permission to replicate the email discussion on the issue tracker (with/without naming them depending on their preference). The mentality is the same with people asking questions in a classroom – if one person has a question, often there are others with the same question who are too shy to ask. By answering the question publicly you reduce the number of duplicate email chains that you have.

Bug reports

Bug reports are for when people find an error in your code. The

common errors are code crashing, or code giving unexpected or wrong

output. Best practice for reporting a bug is to give as much information

as is required to reproduce the error. This is the minimum working

example (MWE), or rather the minimum example that produces the error. It

is at this point where code versions and environments can be super

helpful. Asking people to run mycode --version and paste

the output in the bug report can help a lot. Sometimes people work with

old versions of code and simply updating to a new version can fix the

issue. For a program crash, a copy/paste of the output/error is

useful.

Github allows you to add files and images to the issue tracker so that people have the option of supplying a small amount of real data to reproduce the error. Similarly they can screenshot a problem (particularly useful for graphic interfaces or code that produces plots).

Keep in mind that when someone posts a bug report it is because they are having a problem. That problem is real. It may not be due to your code. It may be due to them misusing or misunderstanding your code. It may be due to issues with code that you are dependent on. It may be your fault. Just remember that people are not looking to place blame, but are looking to find a solution. Have a conversation with them in the issue tracker to figure out what is going on and how you could help solve the problem. If you don’t consider the bug to be a problem (it’s a feature not a bug) then note this in the issue tracker.

Feature requests

Sometimes a user will have an idea about how to improve or expand the capability of the software they are using. A feature request is a way for the user to suggest these improvements. Feature requests are not an indication that something is wrong, but that there is an opportunity to be better. Some example feature requests are:

- support additional input/output formats, or

- support additional operating systems, or

- provide some sanity checking before users made silly mistakes, or

- improve a users quality of life by combining multiple often used functions into one, or

- provide documentation in an alternative format (html, pdf, online wiki etc).

Feature requests are typically a user’s wish list, which if fulfilled, will save the user time or allow them to expand the scope of their work.

Pull requests

For a collaborative software development project you’ll typically

have multiple people contributing code to a range of branches. When the

development of the branch is complete the developer will submit a pull

request to have their changes/updates incorporated into a reference

branch (usually dev or main). A pull request is essentially a moderated

git merge (or git rebase) that allows you to

see any conflicts, see/discuss/approve changes, and make any final

changes required before the merge actually takes place.

People have the option of forking (copying) your public repository and making their own changes. If you are lucky, people will make useful changes to your code and then offer these changes back to you via a pull request. If these changes are aligned with the goals of your project and meet the various style and testing conditions that you set, then the pull request should be accepted.

A pull request is a request. There is no necessity for all pull requests to be accepted, however it is good practice (and polite) to give feedback on any pull requests that are not going to be accepted.

If you would like to capture the style, testing, and documentation

expectations for your project then a file called

CONTRIBUTING.md in the root of the repository is a common

place to define this. You can ask that people making pull requests obey

these expectations, and it is possible to create automated ways of

ensuring these standards are obeyed.

Summary

Whether your development group is just you, or three, or ten people, the issue tracker is a free and convenient workflow management platform.

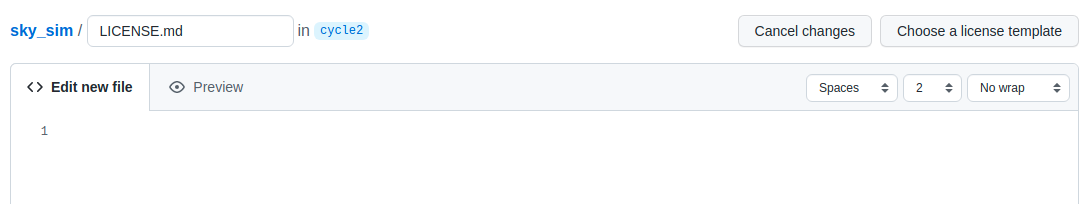

GROUP Activity: Create, discuss, and resolve an issue on GitHub

Given the previous lessons, you should consider creating or updating

the CONTRIBUTING.md file for your project.

- Have one member of your team create a new issue in the GitHub issue tracker to create/update the file and assign the issue to at least one other member of the team.

- Tag the issue with a tag such as

documentation, or create a new tag that is more relevant. - Within your group, use the issue tracker to discuss what sections

are required for the

CONTRIBUTING.mdfile. - Once there is a consensus on the content, have someone make the required changes and push the file to GitHub (or edit directly online using the GitHub editor).

- Try using the

#<IssueNumber>format within the git commit to link the commit to the issue discussion. - When an acceptable file has been created/modified mark the issue as resolved (closed).

Branching and development

In this lesson we’ll focus on one of the most popular git workflows: Feature Branching. Many other workflows exist, but the most important feature of any workflow is that it provides benefit to the project. See the Atlassian tutorials on workflows for more information.

Feature branching

At the core of the feature branching workflow is the idea that all development should be done in a branch separate from the main branch. The rational for this is to ensure that the main branch of the project is always in a not-broken state. When people find your software repository and want to try it out, they will most likely check out the main branch and start their evaluation or usage journey from there. Having a broken main branch is a good way to turn people away from your software, and generate a lot of bug reports.

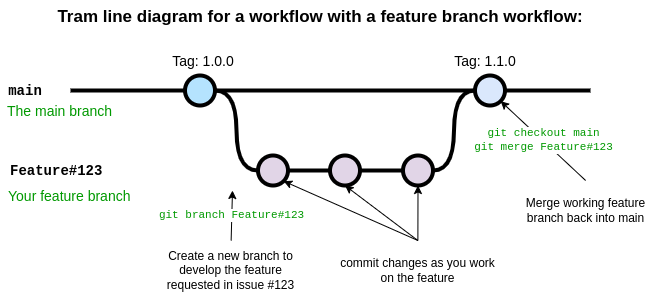

The diagram below shows the basic feature branch workflow.

In the above case a feature has been requested in the issue with

ticket number 123. A developer is assigned the task of developing this

feature and begins by creating a new branch with git branch

using a branch name appropriate for the task. The development proceeds

on the feature branch, occasionally breaking the code, fixing the bugs,

updating tests and documentation, until finally a new version of the

code has been created which implements the new feature. At this point

the developer responsible for this branch ensures all their changes are

pushed to Github and the opens a pull request. During the pull request

other developers, and maybe the person who submitted the initial feature

request, will review and discuss the changes, ensure that the code meets

the repository standards for style and quality. Once everyone is happy

with the changes in the feature branch it is merged into main by

accepting the pull request on Github. Once the feature branch has been

merged into main it is deleted.

In this scheme many features branches can be created, developed, and then deleted over the life-cycle of the project.

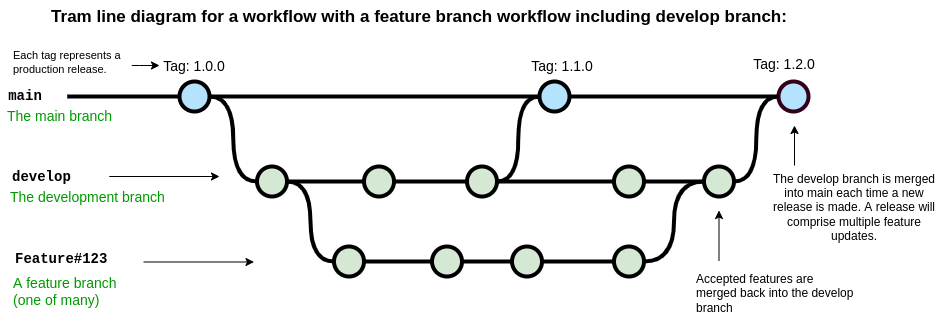

A common variation on the feature branch workflow is to include a development branch as an intermediary between the main and feature branches. Feature branches are created off the develop branch and then merged back when complete. The develop branch therefore contains all of the latest features and if new features interact with each other in unexpected ways, this can be discovered on the develop branch rather than the main branch. The main branch is used for tagging and releasing new versions of the software, and these new versions can each include a number of developments.

Setting up branches

Git does not see any branch as being special. We assign “specialness”

to a branch based on the name, but we can rename branches or change our

idea of special as we like. Regardless of how a git repository has been

set up, you can move from one branching scheme to another at any time.

Creating a new develop branch and then making a habit of

branching features from that instead of main can be done at

any time. The key thing is to make sure that the branching/development

workflow that you decide on is serving a purpose for your project. Early

on in the development of some software you may have a single developer

who is hashing out a proof of concept. In this case you may do all your

development right on the main branch. As you start to share your code

with others you may decide to move development into the develop branch,

and merge back to main only when the code-base is in a working state.

Finally, as you bring more developers into the project you may decide

that a feature-develop-main workflow is a better way to keep the various

developments from interfering with each other.

The point is that you should make a choice, write it down some place

(CONTRIBUTING.md), stick to that choice for as long as it

is useful, and revise it when needed.

An example development cycle for fixing a bug

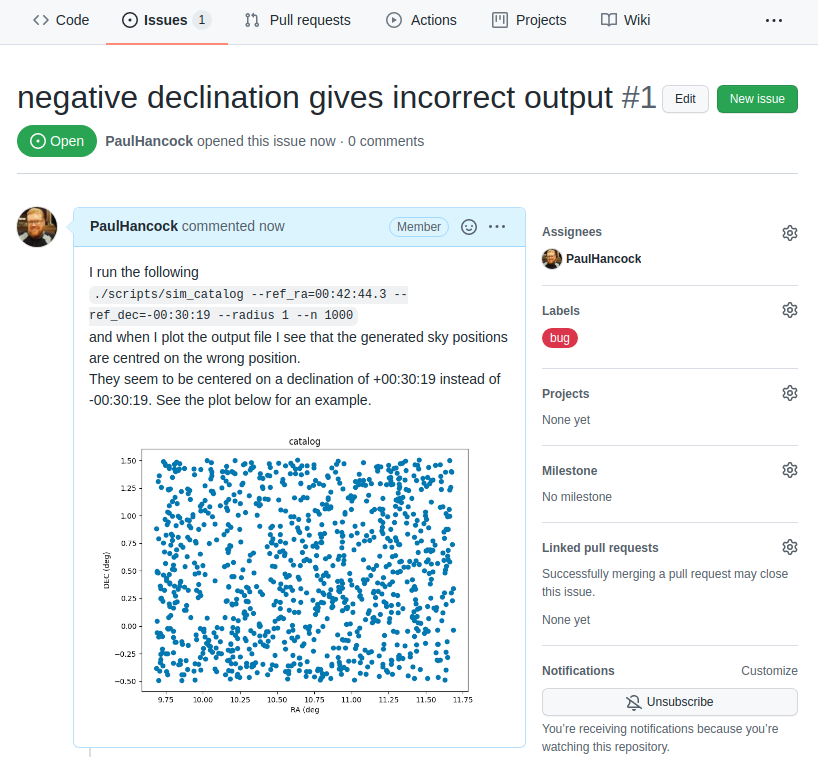

As I’m using my own code for various tasks I notice that

./scripts/sim_catalog --ref_ra=00:42:44.3 --ref_dec=-00:30:19 --radius 1 --n 1000

produces some unexpected output. A plot of the sky locations is shown

below. Note that the points have been generated around a central

declination of 00:30:19 instead of -00:30:19.

It seems that there is an issue with a negative reference

declination.

Reporting the issue

As a user, once I have identified an issue I should go to github and make a bug report on the issue tracker. In the example project I’m the only on the developer team so I’ll assign myself to the issue. I’ll also label it as being a bug.

Confirming the issue

Now that the issue has been submitted I’ll tackle this problem from the developer point of view.

The first thing to do is read and understand the issue. In this case I’ll just run the script exactly as shown in the issue tracker, and see that I get the same problem.

Create a feature branch for this issue

At this point I should create a new branch in my repository with some relevant name. Since the names of issues are not guaranteed to be unique I will instead use the issue number (#1 in this case) as part of the branch name. For a project with multiple developers it is also a good idea to identify who is the ‘owner’ of each branch. An example branch choice would be:

git branch Paulhancock/Issue#1

After some trial and error I find that the cause of the bug is in the following code:

PYTHON

def generate_positions(ref_ra='00:42:44.3',

ref_dec='41:16:09',

radius=1.,

nsources=1000):

...

# convert DMS -> degrees

d, m, s = ref_dec.split(':')

dec = int(d)+int(m)/60+float(s)/3600

...

return ras, decsThe first thing that I do is to make a new test that will expose this bug.

Writing a test

We will now write our first formal test for our code. We’ll use a

format that will make future automated testing easier. All the tests

that we wish to run are python scripts, and we’ll place them in the

tests/ directory. Each script will test a different

sub-module, and for the sim sub-module of our

skysim module, we’ll collect all the tests into

tests/test_sim.py.

The first thing that we need to do is import the module/code that

will be tested. In this case that will be the

generate_positions function within the

skysim.sim module.

PYTHON

#! /usr/bin/env python3

"""

Tests for the skysim.sim module

"""

import numpy as np

from skysim.sim import generate_positionsEach test that we write will be contained within a separate function

whose name begins with test_, and which returns

None when the test passes, and raises an

AssertionError if the test fails. While we could home-brew

our own set of standards for what pass/fail looks like, we will instead

use standards set out by one of the common python testing frameworks

called pytest.

We craft a piece of code that will detect the mistake in our original

function. In this case the mistake is that the negative sign at the

start of the declination is being ignored so we get the wrong positions.

To test for this we’ll run generate_positions with a

declination that is negative, and small radius, so that the expected

output should consist entirely of negative declinations if the function

works properly, and probably all positive declinations if it’s

broken.

Our test function looks like this:

PYTHON

def test_negative_dec():

"""

Test for the negative dec bug noted in issue #1

"""

_, decs = generate_positions(ref_ra='00:00:00',

ref_dec='-00:30:19',

radius=0.1, nsources=10)

if not np.all(decs < 0):

raise AssertionError("Declinations should be <0, but are >0")

returnIn order to run the tests we can add the following snippet to the end

of our script. The snippet essentially looks at all the global variables

(including function names), selects those that start with

test_, assumes that they are a function and calls that

function. When the function is called there is a try/except for an

AssertionError which reports failure if it’s caught, or

reports success if no error was raised.

PYTHON

if __name__ == "__main__":

# introspect and run all the functions starting with 'test'

for f in dir():

if f.startswith('test'):

try:

globals()[f]()

except AssertionError as e:

print("{0} FAILED with error: {1}".format(f, e))

else:

print("{0} PASSED".format(f))When we run our test code we get the following result:

BASH

$ python tests/test_sim.py

test_negative_dec FAILED with error Declinations should be <0, but are >0This failure is not a bad thing, it means that we have successfully written a test function that will identify the bug. Now we can begin the process of fixing the bug.

Fixing the bug

Finally, once I have the test code in place, it’s time to fix the bug. I make some modifications to account for the leading minus sign on the declination as follows:

PYTHON

# convert DMS -> degrees

d, m, s = ref_dec.split(':')

sign = 1

if d[0] == '-':

sign = -1

dec = sign*(abs(int(d))+int(m)/60+float(s)/3600)And I then re-run the code to make sure that the bug has been resolved, and then run my tests:

As I develop more and more tests the list of functions run will grow. Once the new bug has been solved I will re-run all my tests to ensure that fixing this bug has not caused a new bug some other place.

Checking in my work

I now check in my new test code, and updated version of sim.py:

BASH

git add tests/test_sim.py

git commit -m 'expose bug from issue#1'

git commit -m 'resolve #1' skysim/sim.pyNote that I have used #1 to refer to the issue from

within my commit message. When viewed on Github these commit messages

will automatically generate a link to the issue, and when viewing the

issue I should be able to see the reverse link.

I now push the bug fix (and my new branch) to the Github repo.

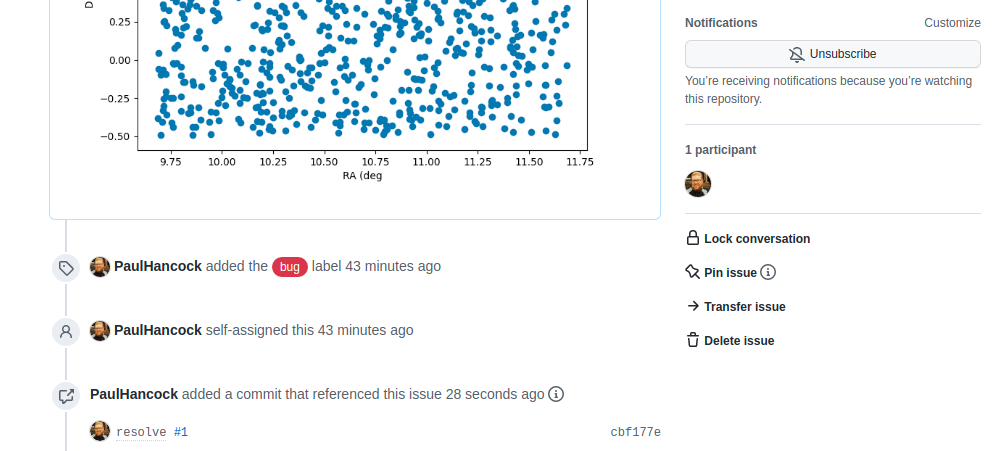

If we look on the original issue page, we can see the link to the commit.

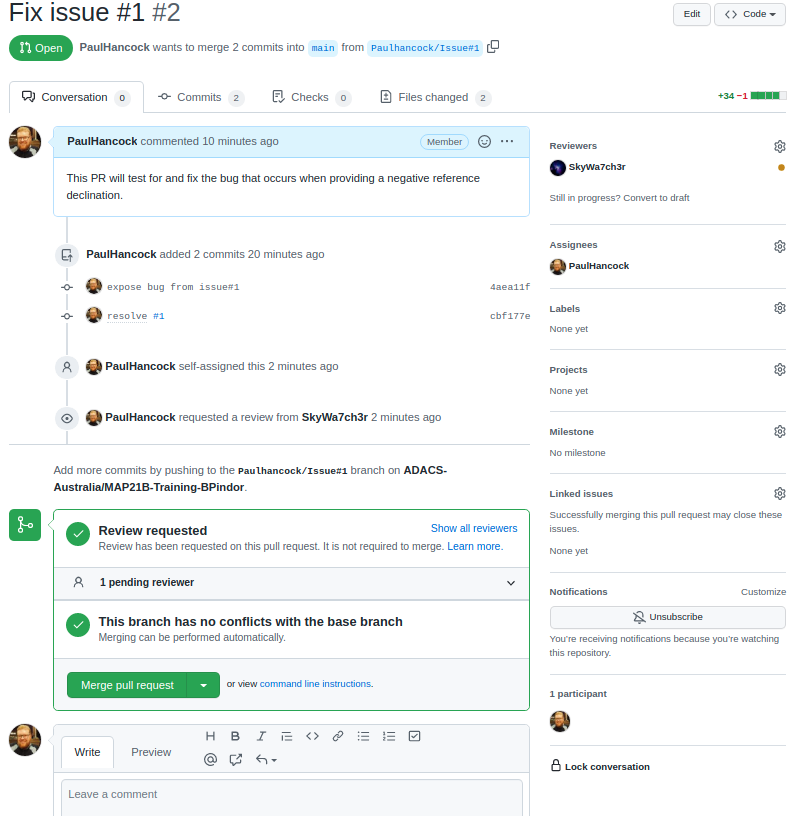

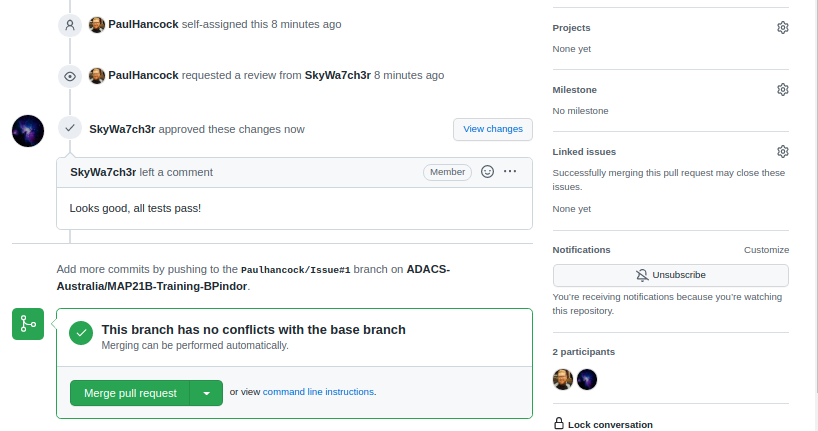

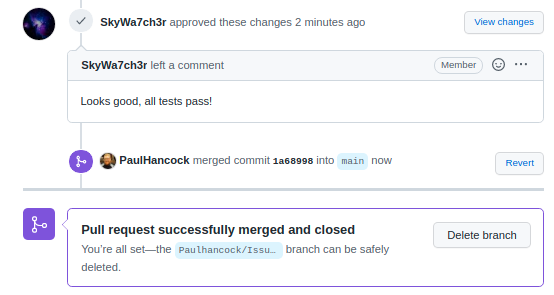

Creating a pull request

When we navigate to the landing page for our repository we will see a new yellow banner appear as below:

We can click the green “Compare & pull request” button to start a new pull request. Alternatively we can go to the “Pull requests” tab. Either way we enter a title and description for the pull request.

Note that the assign/label/project/milestone options that we see on

the pull request form are mostly the same as on the Issues form. This is

because pull requests are just special types of issues. They share a

numbering scheme. This is the first pull request for this repository but

it will be labelled #2 because there is an existing issue

#1. One difference between a pull request and an issue is

that a pull request can have a reviewer assigned to it. Here I have

selected myself as the assignee (the person looking after the pull

request), and SkyWa7ch3r as the reviewer (the person who

will review my code and sign off when they are happy).

Github does some work in the background to let me know that there

will be no conflicts between this branch and the main branch, so that it

is ‘safe’ to do the merge. Currently there is no indication that the

code works or passes our tests. For now we let the reviwer do this work.

The reviewer would pull the Paulhancock/Issue#1 branch, run

the tests and see that they pass, then come back to github and make a

note of it in the discussion. (In a later lesson we’ll see how we can

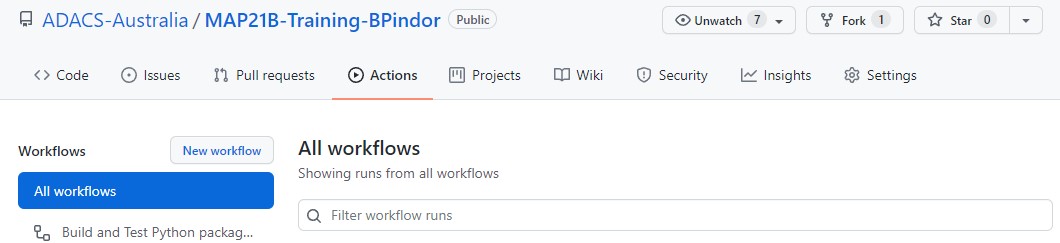

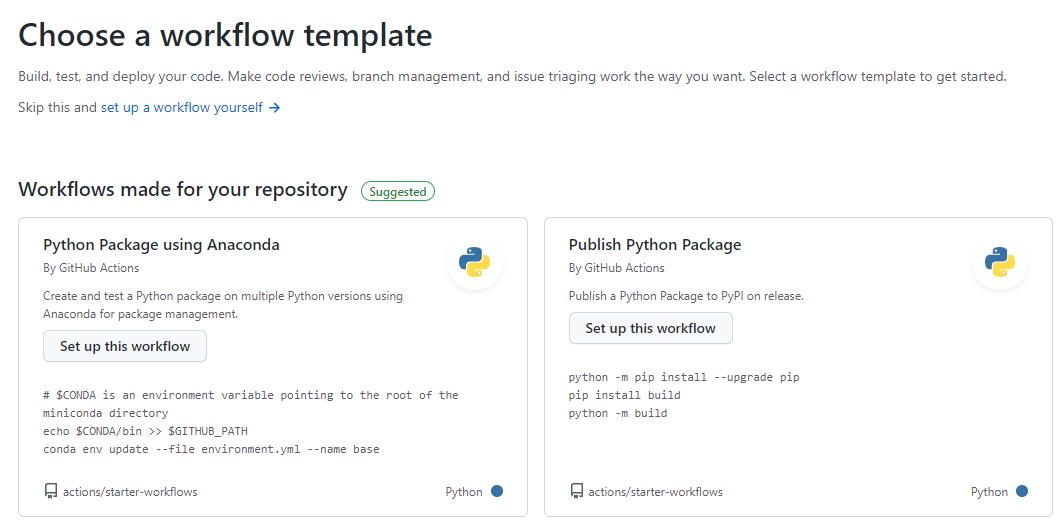

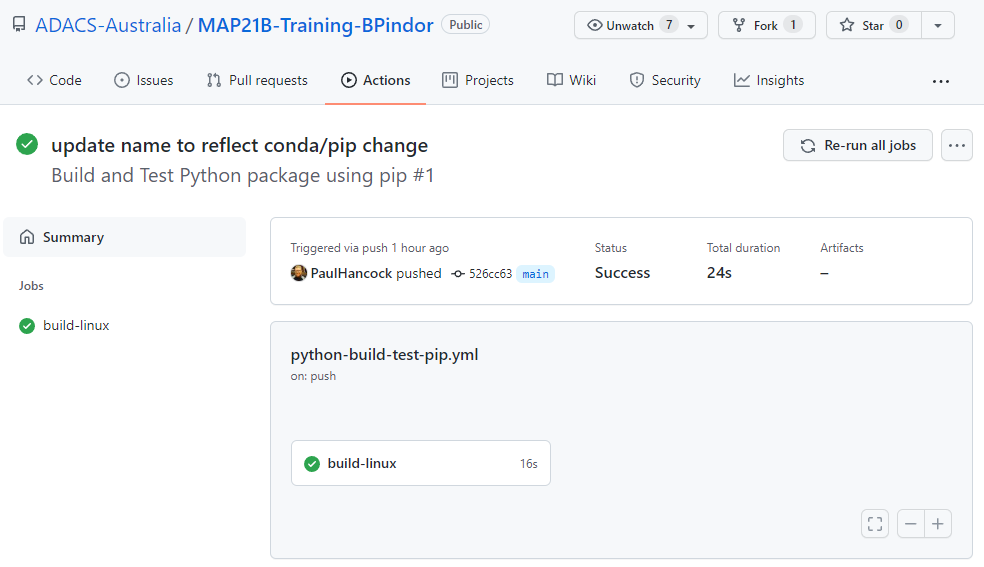

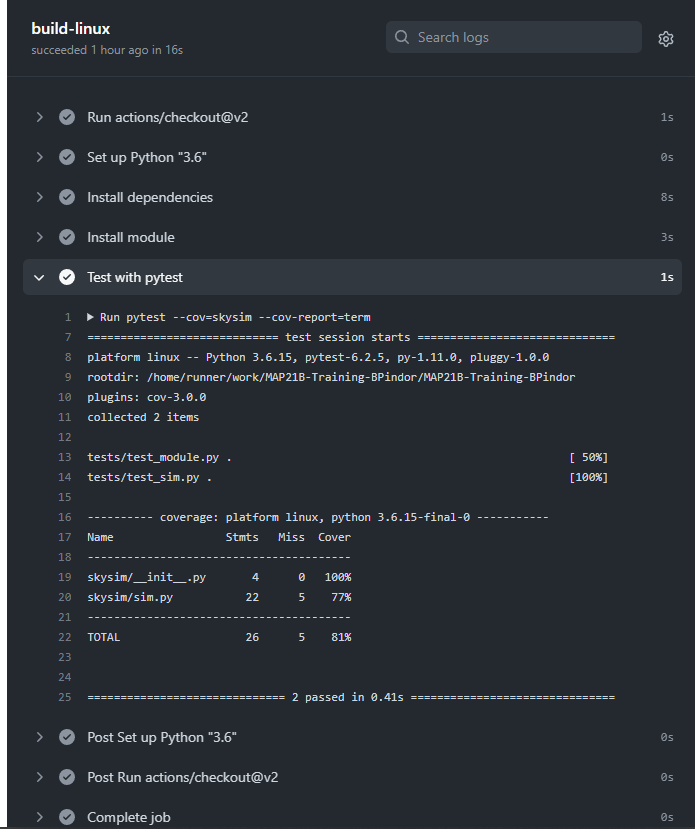

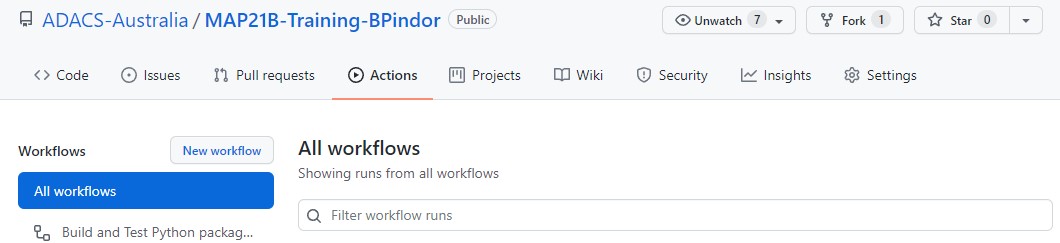

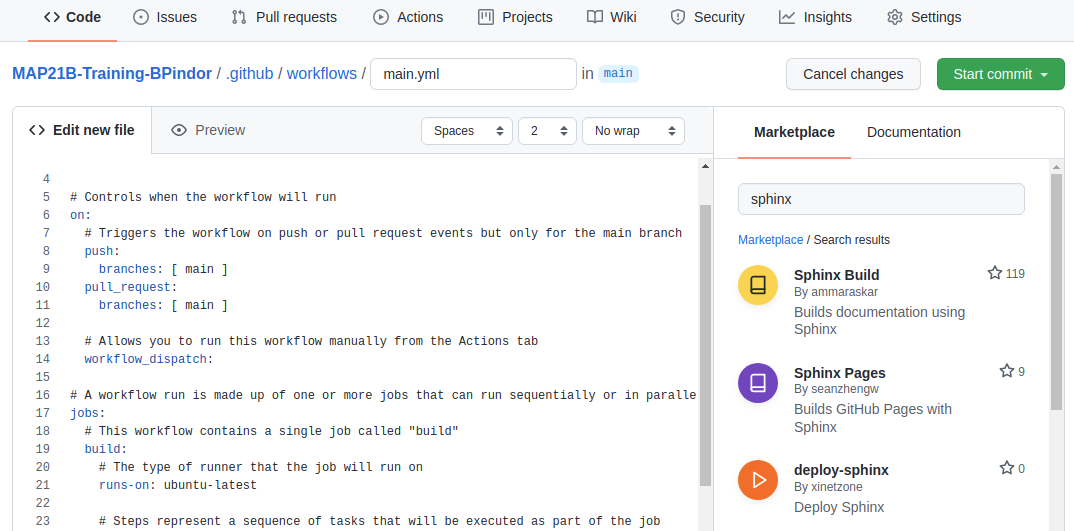

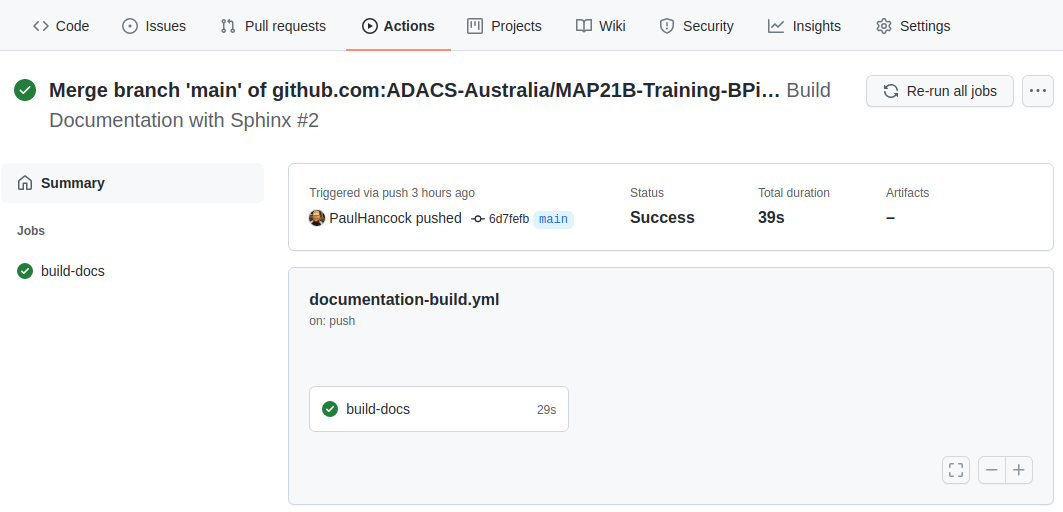

make Github do most of this work for us using Github actions.)

Once our reviewer(s) are happy with the changes we can merge our branch back into main by pressing the green button. This will create a new commit on the main branch in order to do the merge, so we’ll be asked for a title/description for the commit. It is pre-filled for us. Once the merge is complete Github will let us know that all is good, and suggest that we delete the branch. Since the feature is merged we no longer need this branch and will delete it.

Closing the issue

If we navigate back to the issues tab, we’ll see that the issue

related to the pull request has also been closed for us. This is because

we wrote resolve #1 as a comment for our commit. When that

commit is merged into the main branch git will automatically resolve

(close) the linked issue. If we didn’t use this smart linking capability

we can still go back to the open issue and close it. Either way it would

be good to leave a note about the issue being fixed.

Summary

The development cycle for fixing a bug is as follows:

- identify bug

- report bug on the Gihub issue tracker

- confirm that the bug exists

- create a feature branch

- write a test to expose the bug/error

- fix the bug

- run all tests

- commit changes

- create a pull request

- merge the branch into main and delete the feature branch

In this example we had one person doing the reporting/fixing. Usually you’ll have an end user doing the finding/report part, and then one or more developers doing the remainder.

GROUP Activity: Working on the same problem

In this activity you will practice working in a team as you go through a simple development cycle.

- As a group, identify a minor issue with your current project. (Don’t feel bad, the only projects that don’t have issues are the ones that no one is using).

- Since the focus here is on the life-cycle of a bug, choose a small thing that is easy to identify and fix.

- A function without a docstring would be ideal (and probably easy to find).

- Have one team member create an issue that describes the problem and explains what a solution would look like.

- eg “myFunc(4) should return a filename”, or “myFunc should have a

docstring in

”. - Create a new branch for development work called

or Issue . - Assign at least two team members to work on this issue.

- Have one team member create a solution for the issue and then:

- Push the changes to the remote repository,

- Open a pull request to merge this branch into main,

- Set the assignees to be all the members working on the issue,

- Set the reviewer to be a team member who is not working on the code (if you have enough people, otherwise choose someone other than the pull request creator), and

- Add whatever labels look appropriate.

- Have the other team members:

- View the changes via the “files changed” tab of the pull request,

- Comment on what is good and what needs improving,

- Pull the active branch to their local machine,

- Make an improvement and commit the change, and

- Push the change back to the active branch.

- Everyone should have an opportunity to make comments and changes, but you’ll need to coordinate who is doing what and when to minimise the number of merge conflicts that occur.

- Once everyone has had a chance to view/comment/change, have the reviewer sign off on the pull request and merge the changes into the main branch.

Content from Making Code Reusable by You and Your Group

Last updated on 2026-02-25 | Edit this page

Estimated time: 180 minutes

Overview

Questions

- How do I easily share or re-use my code?

- When sharing, what information should I provide?

- Where and how can I easily share code?

Objectives

- Package your code into a module.

- Create a command line interface for your code.

- Identify and include meta-data in your module.

Separating the UI from the functionality

A programming principle called model-view-controller

(MVC) is recommended when designing code. Under MVC, programs are

separated into one of three components, the model (library) which

provides the core functionality, a viewer which provides a visualisation

of the results, and a controller which links the user to the model. In

our test code the model would be the two functions

generate_positions and write_file, whilst the

controller would be the command line interface. [In this example we

could consider the file output to be the viewer.]

At the end of cycle1 we had a single file, sim.py, which

contained both the model and the controller. Our first task will be to

separate this into two parts. The first part will be a python module

which provides the functionality, and the second will be a script which

receives user input and calls the library functions.

Creating a python module

Python modules, like the numpy module that we have

already used, can be easily created by obeying a simple directory/file

structure. If we want to create a module called skysim then

all we need to do is create a directory with the same name, and add an

empty file called __init__.py. Let’s do that now:

To access the module we simply use import skysim.

BASH

$ python

>>> import skysim

>>> dir(skysim)

['__builtins__', '__cached__', '__doc__', '__file__', '__loader__', '__name__', '__package__', '__path__', '__spec__']

>>> We can see that the skysim module already has some

attributes defined, and they all start with a double underscore (

__ or ‘dunder’ ). The file attribute is a

string containing the full path to the file __init__.py.

The __name__ attribute will contain the string

skysim because this is the name of the module. If we had

renamed the module on import (using import skysim as other)

then the __name__ attribute would still be the same. Feel

free to explore the other attributes.

In order to add some functions or attributes to our module we can

simply add our sim.py file to the skysim

directory. If we do this and then restart our python interpreter we can

import all the functions/modules/variables provided by

sim.py by doing from skysim import sim. For

example:

BASH

$ python

>>> from skysim import sim

>>> dir(sim)

['__builtins__', '__cached__', '__doc__', '__file__', '__loader__', '__name__', '__package__', '__spec__', 'argparse', 'generate_positions', 'math', 'np', 'write_file']

>>> Above we can see the two functions that we defined, as well as the

modules that we imported (argparse, math, and

np). We now have a library that we can import. When we

import a module, all the code within that file is executed. In the case

of our sim.py file this means that we import some other

modules and then define some functions.

If a python file is run via python myfile.py then the

__name__ attribute will be set to the string

__main__. However if a python file is imported via import